show that in setups with two agents and a very simplistic demand model, such equilibria are found reliably. Instead, both agents deviate around the equilibrium slightly. At some points, pseudo-solutions are found, in which no exact equilibrium is achieved. Q-learning does converge toward such equilibria. In such a setup, there are several equilibria, in which very similar pricing policies are used by both agents and stay unaltered. They analyze a scenario with two agents using tabular Q-learning to learn pricing policies in a duopoly setup. Kephart and Tesauro ( 2000) use Q-learning in multi-agent scenarios with a demand model in which the reward is a function that mostly relies on the price rank. They use Q-learning only with discount factors being set to zero, making it unsuitable for problems where long-term effects do matter. They observe that Q-learning does find near-optimal policies, though being slower than specialized alternatives. They used Q-learning to implement a pricing bot in that scenario. ( 2003) present a multi-agent market model, in which the seller has to determine both the offer price and the number of goods produced in a setting with another competitor that dynamically makes the same decision. Many examples are available where RL has been applied to different market models. While RL is well known in the community, publications around it are scattered and broad, as observed by den Boer ( 2015). The “ Conclusion” section concludes the paper. The “ Application in oligopoly competition” section considers oligopoly settings. The “ Competition between different self-adaptive learning strategies” section shows experiments, where two RL systems directly compete against each other. In the “ Price collusion in a duopoly” section, we investigate the formation of price collusion. In the “ Performance in duopoly environments” section, we study RL strategies in different duopoly settings against deterministic and randomized strategies. 
The “ RL algorithms” section gives an overview of RL algorithms. The “ Related work” section discusses related work. We show that RL strategies can be successfully applied in oligopoly scenarios. We study RL strategies regarding their tendencies in a duopoly to form a cartel. We compare their performance compared to optimal strategies in tractable duopoly settings derived by dynamic programming techniques. We compute self-adaptive pricing strategies using DQN and SAC algorithms. In this paper, we analyze how far RL algorithms can be used to overcome the limitations of dynamic programming approaches to solve dynamic pricing problems in competitive settings. ( 2018), rely on directly tuning a parametrized strategy and use value estimations only to guide updates of the policy. Other approaches, like Soft Actor Critic (SAC), see Haarnoja et al. It estimates the expected reward of pursuing a certain action and searches for the action with the highest expected reward. 2015) is a commonly used example of classical RL algorithms. A few examples of them being applied to other pricing problems are available as well, see Kephart and Tesauro ( 2000), Kim et al.

They have been used for various problems in the past, for example, video games and robotics. They are problem independent and require only hyperparameter tuning to be set up. RL offers algorithms, that are focused around solving games by maximizing a reward stream offered by the game. Duopoly markets offer the advantage, that optimal solutions can still be computed via dynamic programming (DP), cf., e.g., Schlosser and Richly ( 2019), which provides an opportunity to compare and verify the results of reinforcement learning (RL). We analyze two examples of such markets focusing on durable and replenishable goods: (i) duopolies, that require the agent to compete with a single competitor and (ii) oligopolies with multiple active competitors. While it is possible to use simple pricing strategies and tune their parameters manually, this raises the question of whether it is possible to provide an automated solution for such problems.

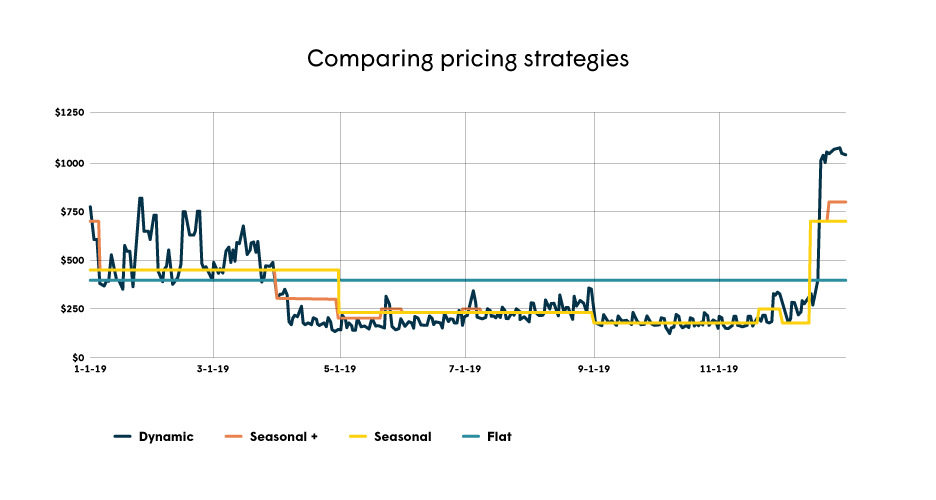

Those price updates might occur at a high frequency. Many traders nowadays can make use of dynamic pricing algorithms, that automatically update their price according to the competitors’ current offers. If your goods’ prices are way off the competition, customers might go for cheaper competitors or ones that offer a better service or a similar product. In modern-day online trading on large platforms using the correct price is crucial.
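To make the value-based idea referred to in this introduction concrete (estimate the expected reward of each action, then pick the action with the highest estimate), the following is a minimal sketch of tabular Q-learning for a pricing agent in a toy duopoly. It is not the setup used in this paper or in the cited works: the price grid `PRICES`, the unit cost `COST`, the winner-takes-all demand in `reward`, and the undercutting competitor in `rival_price` are all hypothetical choices made only for illustration.

```python
import random

PRICES = [1, 2, 3, 4, 5]  # hypothetical discrete price grid
COST = 1                  # hypothetical unit cost


def reward(own, rival):
    """Toy demand (assumption): the cheaper seller gets the sale, ties split it."""
    if own < rival:
        share = 1.0
    elif own == rival:
        share = 0.5
    else:
        share = 0.0
    return share * (own - COST)


def rival_price(own_last):
    """Hypothetical fixed competitor: undercut by one step, never below 2."""
    return max(2, own_last - 1)


def train(episodes=20000, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning; the state is the competitor's current price."""
    rng = random.Random(seed)
    q = {s: [0.0] * len(PRICES) for s in PRICES}
    state = rng.choice(PRICES)
    for _ in range(episodes):
        # Epsilon-greedy action selection over the price grid.
        if rng.random() < epsilon:
            a = rng.randrange(len(PRICES))
        else:
            a = max(range(len(PRICES)), key=lambda i: q[state][i])
        own = PRICES[a]
        r = reward(own, state)
        next_state = rival_price(own)
        # Standard Q-learning update toward the bootstrapped target.
        target = r + gamma * max(q[next_state])
        q[state][a] += alpha * (target - q[state][a])
        state = next_state
    return q


def greedy_policy(q):
    """Map each competitor price to the own price with the highest Q-value."""
    return {s: PRICES[max(range(len(PRICES)), key=lambda i: q[s][i])]
            for s in PRICES}


if __name__ == "__main__":
    print(greedy_policy(train()))
```

The parameter `gamma` is the discount factor. Setting `gamma=0` gives a myopic learner that only considers the immediate reward, which is the restriction noted for some earlier work in this section.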
